Introduction

This blog post explains how to configure a Linux host running HCL VersionVault and Docker so that Docker containers on it have dynamic view client access. It used to be tedious for developers to configure their environments for different versions of software on a single machine. Now, however, they can create an isolated environment, called a Docker container, for each version of the app. Each container holds a separate environment configuration that doesn’t affect the configuration of the OS or of any other container running concurrently on the developer machine. To provide this flexible environment for users, HCL VersionVault has been enhanced to support Docker.

| Sl. No. | Topic |
| --- | --- |
| 1 | Prerequisites for the Integration of VersionVault and Docker Containers |
| 2 | Installing VersionVault and Docker on Linux Host |
| 3 | Installing NFS Server and Linux Automounter on Docker Host |
| 4 | VOB and View Creation on Docker Host |
| 5 | Sample Docker Container: Container with Dynamic View Client Access |
| 6 | Final Integration in Action |

1. Prerequisites for the Integration of VersionVault and Docker Containers

The following four components must be installed and running on the Linux host:

- HCL VersionVault version 2.0.1 or later

- Docker

- NFS service

- Automounter service
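A quick way to see which of these components are already present is to check for their command-line tools. The sketch below is a minimal example, not an official check: the command names (cleartool for VersionVault, docker, exportfs for the NFS server utilities, and automount for the automounter) are assumptions, and cleartool in particular may be installed outside your PATH.

```shell
#!/bin/sh
# Report whether each named command is on PATH.
# Returns non-zero if any of them is missing.
check_prereqs() {
    missing=0
    for cmd in "$@"; do
        if command -v "$cmd" >/dev/null 2>&1; then
            echo "found:   $cmd"
        else
            echo "missing: $cmd"
            missing=1
        fi
    done
    return "$missing"
}

# Assumed command names: cleartool (VersionVault), docker (Docker),
# exportfs (NFS server utilities), automount (autofs daemon).
check_prereqs cleartool docker exportfs automount ||
    echo "Install the missing components before continuing."
```

A command on PATH only shows that the software is installed; whether the NFS and automounter services are running is checked with systemctl in section 3.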

2. Installing VersionVault and Docker on Linux Host

2.1. VersionVault Installation

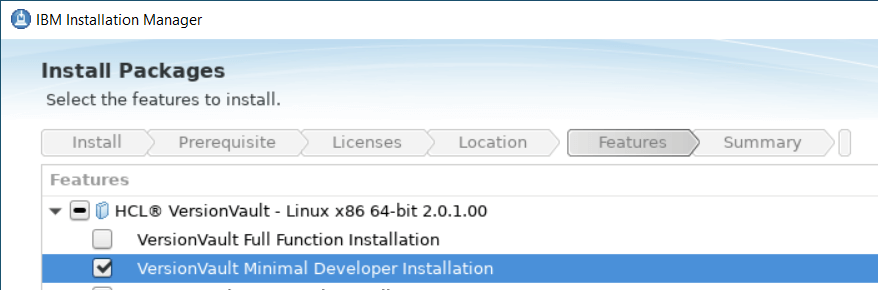

- To share the MVFS and the MVFS device, the Docker host must have at least VersionVault 2.0.1 installed and configured to run as a VersionVault dynamic view client.

- Only the VersionVault minimal developer installation is required on the Docker host, since it installs the smallest number of VersionVault components required for a command-line user’s software development workstation.

- The major components it includes, which must be shared with the Docker container, are the MVFS and the view/VOB server.

- IBM Installation Manager 1.8.6 or 1.9.1, or one of their later fix packs, is required to install HCL VersionVault (VV) 2.0.1 on the Docker host.

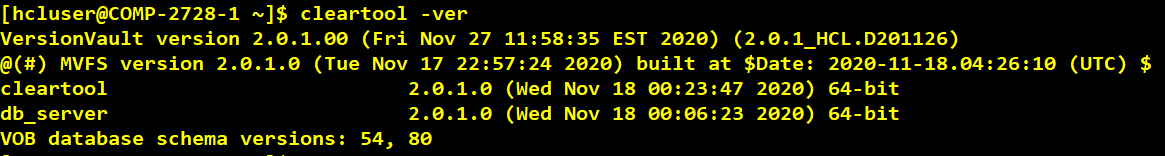

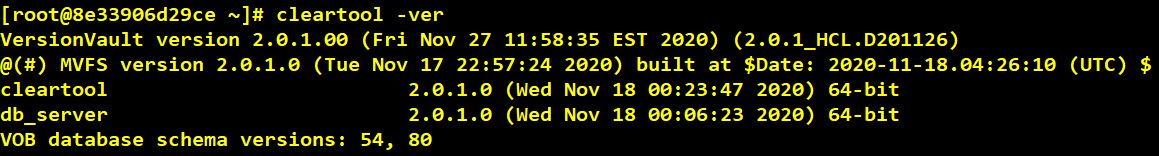

- The screenshot of the “cleartool -version” command shows its output after installation of VV 2.0.1.

2.2. Docker Installation

- Any recent version of Docker can be installed on the Linux host alongside VersionVault 2.0.1. The Docker Engine installation overview linked below gives installation steps for different Linux platforms.

https://docs.docker.com/engine/install/

3. Installing NFS Server and Linux Automounter on Docker Host

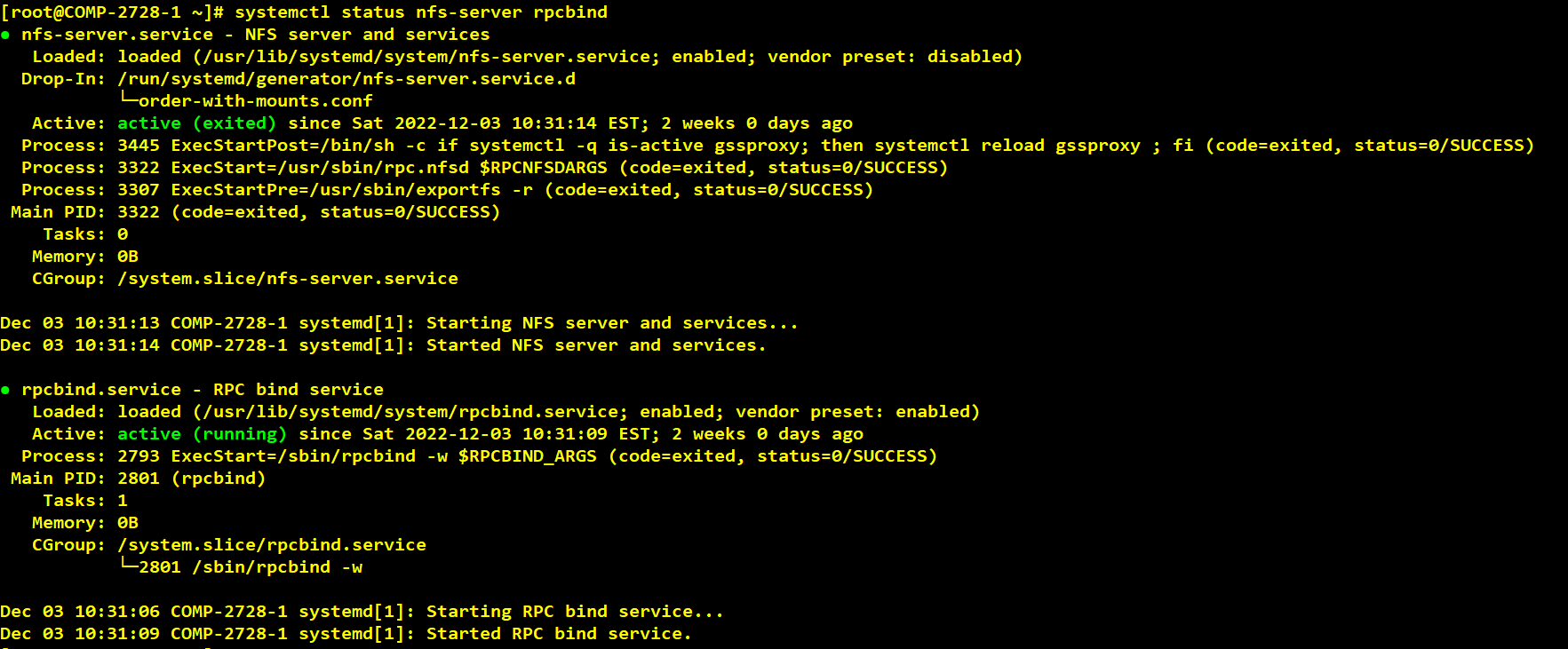

- VersionVault uses NFS and the Linux automounter to access view or VOB storage. The Docker host system must be configured for NFS and the Linux automounter.

3.1. Installing NFS Server

- In this blog post I used Red Hat Linux. The commands for these steps will vary depending on the specific Linux distribution used.

Step 1: Update the server and set the hostname.

Your server should have a static IP address and a static hostname that persist across reboots.

Step 2: Install the NFS server.

Next, install the NFS server packages on the Linux host.

After the installation, start and enable the nfs-server service.

The service status should show “running.”

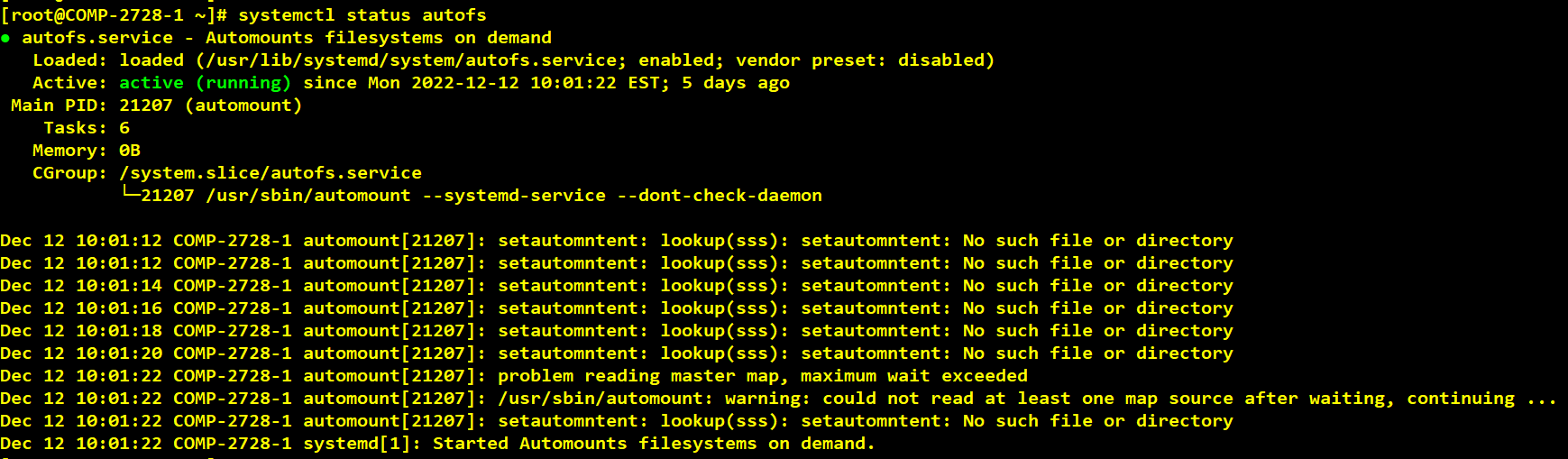
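On a Red Hat-family system, the two steps above might look like the sketch below. It is only an illustration under stated assumptions: dnf as the package manager (older releases use yum), nfs-utils as the package name, and dockhost.example.com as a placeholder hostname. To stay safe to paste, the script prints each command instead of running it unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch of the NFS server setup on a Red Hat-family host.
# Assumptions: dnf is the package manager, the package is nfs-utils,
# and dockhost.example.com is a placeholder hostname.
# Commands are only printed by default; set DRY_RUN=0 to execute them as root.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_nfs_server() {
    host_name=$1
    # Step 1: update the server and set a persistent hostname.
    run dnf -y update
    run hostnamectl set-hostname "$host_name"
    # Step 2: install the NFS server, then start and enable the service.
    run dnf -y install nfs-utils
    run systemctl start nfs-server
    run systemctl enable nfs-server
    # The status output should report the service as running.
    run systemctl status nfs-server
}

setup_nfs_server dockhost.example.com
```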

3.2. Installing Linux Automounter

Step 1: Install autofs on the Linux host.

When the AutoFS package is installed, the installation process will:

- Create multiple configuration files in the /etc directory such as auto.master, auto.net, auto.misc, and so on.

- Create the AutoFS service in systemd.

- Add the “automount” entry to your “nsswitch.conf” file and link it to the “files” source.

Step 2: Right after the installation, make sure the AutoFS service is running with the “systemctl status” command.

Step 3: You can also enable the AutoFS service so that it starts at boot.

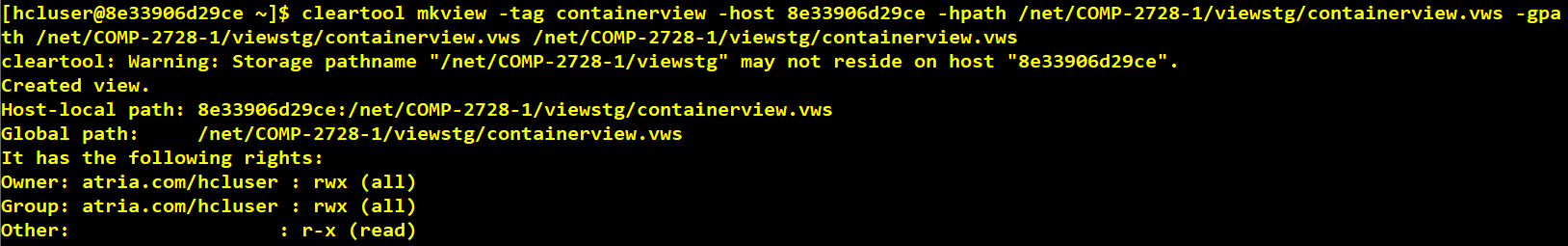
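The automounter steps above can be sketched the same way. As before, dnf and the autofs package name are assumptions for a Red Hat-family host, and the explicit start is included because the package may not start the service on its own. The commands are printed rather than executed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch of the autofs setup on a Red Hat-family host.
# Assumptions: dnf is the package manager and the package is autofs.
# Commands are only printed by default; set DRY_RUN=0 to execute them as root.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_autofs() {
    # Step 1: install the automounter.
    run dnf -y install autofs
    # Step 2: start the service if needed and confirm that it is running.
    run systemctl start autofs
    run systemctl status autofs
    # Step 3: have the service start at boot.
    run systemctl enable autofs
}

setup_autofs
```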

4. VOB and View Creation on Docker Host

- When creating VOBs/Views to be used within the Docker containers, you should specify the -host, -hpath, and -gpath options. Both -hpath and -gpath should be the global pathname of the VOB/View storage directory.

For example, suppose you would like to create a VOB/View residing on a machine whose hostname is dockhost. Both your Docker host and the VOB/View server host, dockhost, use the automounter.

To create a VOB/View on the Docker host to be used within a container, you would do the following:

cleartool mkview -tag testview -host dockhost -hpath /net/dockhost/viewstg/testview.vws -gpath /net/dockhost/user/viewstg/testview.vws /net/dockhost/user/viewstg/testview.vws

cleartool mkvob -tag /vob/testvob -host dockhost -hpath /net/dockhost/vobstg/testvob.vbs -gpath /net/dockhost/user/vobstg/testvob.vbs /net/dockhost/user/vobstg/testvob.vbs

These options are required if the VOB/View resides on the Docker host or in a Docker container.

It is recommended that Docker containers use VOBs/Views external to the Docker container, since both storage and hostname can be temporary depending on how the container is configured.

5. Sample Docker Container

5.1. Docker Container with Dynamic View Client Access

- With this method, we can create a view inside a container and access the VOB to create new file/directory elements or modify existing ones.

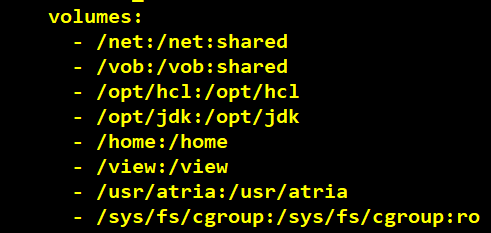

- The Docker build directory consists of Dockerfile, config_ssh.sh, config_dir, and docker_compose.yml.

- The Linux base image must be supported by the VersionVault release running on the Docker host, and it should also support the init process. Both RedHat and SUSE provide base images within their registries.

- Linux base images might not contain all the packages required to run VersionVault. The user must update the container to install these packages. Refer to Technotes 535653, 887639, and 718343.

- For more information on the Docker build directory and the sample Dockerfile, docker_compose.yml, config_ssh.sh, and config_dir for a container with dynamic view client access, refer to the link below. In the Resources dropdown in the upper-right corner of the window, look for the link to the article “HCL VersionVault and Docker Containers.”

https://www.hcltechsw.com/versionvault

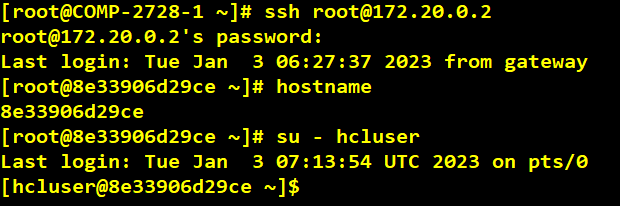

6. Final Integration in Action

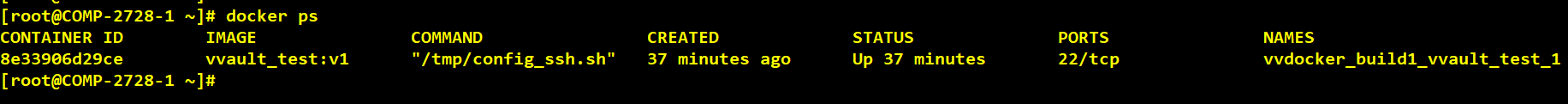

- Once the Dockerfile and docker_compose.yml file contain all the required configuration details, navigate into the Docker build directory and run the Docker Compose “up” command to build and run the container. The “-d” argument runs the container in detached mode.

- The command above builds, (re)creates, starts, and attaches to containers for a service. To see the running container, list the running containers with the “docker ps” command.

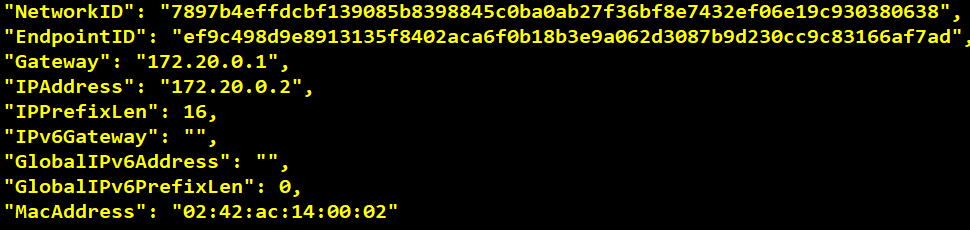
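As a rough sketch, building and starting the container could look like this. The build directory path ./docker_build is a placeholder, and the docker-compose binary name is an assumption (newer Docker installations use “docker compose” instead). The “-f” option is passed because docker_compose.yml is not one of the file names Docker Compose looks for by default. Commands are printed rather than executed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch: build and start the container, then list running containers.
# Assumptions: ./docker_build is a placeholder for the Docker build directory,
# and the Compose binary is named docker-compose.
# Commands are only printed by default; set DRY_RUN=0 to execute them.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

start_container() {
    build_dir=$1
    run cd "$build_dir"
    # -f names the compose file explicitly; -d runs the container detached.
    run docker-compose -f docker_compose.yml up -d
    # Show the running containers.
    run docker ps
}

start_container ./docker_build
```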

- To ssh into the container, we first need to find the container’s IP address, which we can do by inspecting the container with the “docker inspect” command.

At the end of the command output you will find the IP address. Below is the sample snippet for reference.
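One possible way to look up the address is sketched below. The container name vv_container is a placeholder; use the name or ID shown by “docker ps”. The second command uses a Go template to print only the IP address instead of the full JSON output. Commands are printed rather than executed unless DRY_RUN=0 is set, and the printed form omits the quotes that the shell needs around the template.

```shell
#!/bin/sh
# Sketch: find a container's IP address with docker inspect.
# Assumption: vv_container is a placeholder container name.
# Commands are only printed by default; set DRY_RUN=0 to execute them.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

show_container_ip() {
    container=$1
    # Full JSON description; the network settings are near the end.
    run docker inspect "$container"
    # Print only the IP address of each attached network.
    run docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$container"
}

show_container_ip vv_container
```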

- Once the container’s IP address is found, enter the container’s IP address and hostname in the Docker host’s /etc/hosts file. Similarly, ssh into the container using the account created during container creation (as specified in the Dockerfile) and add the Docker host’s IP address and hostname to the container’s /etc/hosts file.
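Editing /etc/hosts by hand works fine; if you prefer to script it, a small helper such as the one below avoids adding the same hostname twice. This is a sketch only: the function name add_host_entry, the IP address, and the hostname are all made up for illustration, and the demonstration runs against a scratch file rather than the real /etc/hosts.

```shell
#!/bin/sh
# Append "IP<TAB>hostname" to a hosts file unless the hostname is already listed.
add_host_entry() {
    hosts_file=$1
    ip=$2
    name=$3
    # Look for the hostname as a whole field on any non-comment line.
    if awk -v n="$name" '
        $1 ~ /^#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$hosts_file"; then
        echo "already present: $name"
    else
        printf '%s\t%s\n' "$ip" "$name" >> "$hosts_file"
        echo "added: $ip $name"
    fi
}

# Demonstration on a scratch file; the address and name are placeholders.
demo_hosts=$(mktemp)
add_host_entry "$demo_hosts" 172.18.0.2 vv_container
add_host_entry "$demo_hosts" 172.18.0.2 vv_container
cat "$demo_hosts"
rm -f "$demo_hosts"
```

On the Docker host you would call it as root with /etc/hosts and the container’s address and hostname; inside the container, call it with the Docker host’s address and hostname.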

- In this blog post, the Dockerfile is customized to create a “root” account during container creation, so we use that account to log in to the container. You can also create a VOB owner and a normal VersionVault user as required by modifying the Dockerfile; make sure the account can run cleartool commands, create views, and access the VOBs.

- After login, we switch to the VOB owner account “hcluser,” which is created inside the container to match the account that exists on the Docker host. A dedicated VersionVault VOB owner account is used because it’s not good practice to have the root account or the VersionVault administrator group own a VOB or VOB objects, as we might end up facing permission issues.

- Inside the container we can now run the “cleartool” command.

The “cleartool” command execution is possible only because the Docker host shares VersionVault binaries with the container as volumes.

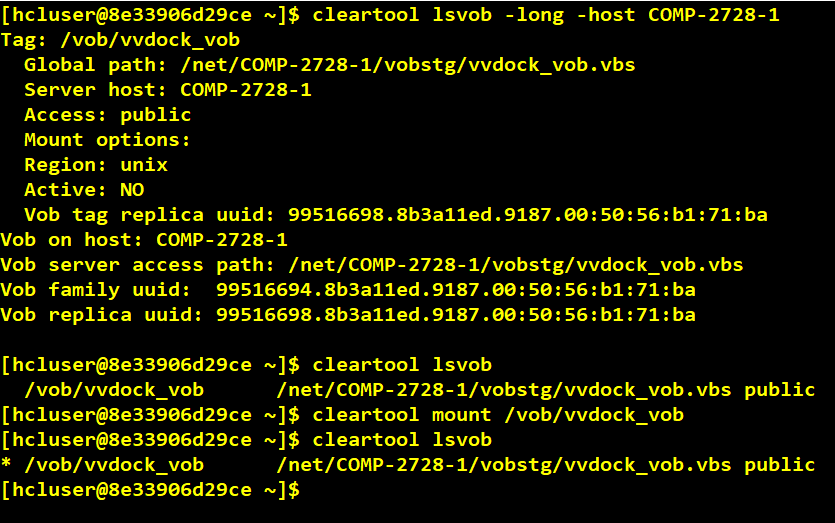

- From the Docker container we can list and describe the VOB, which is hosted on the Docker host.

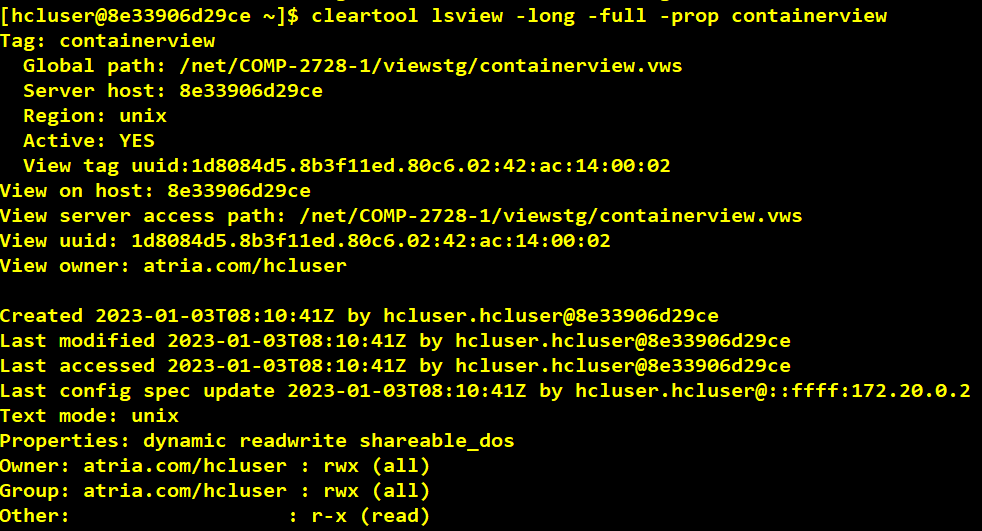

- Next, we create a view in the container to access the VOB hosted on the Docker host.

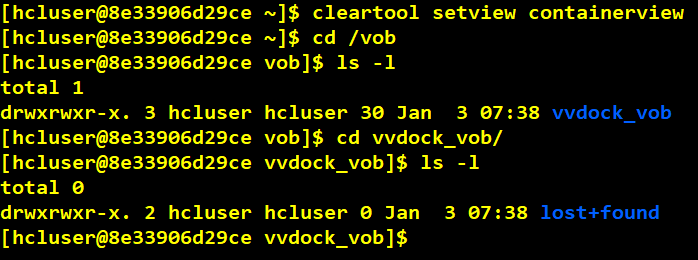

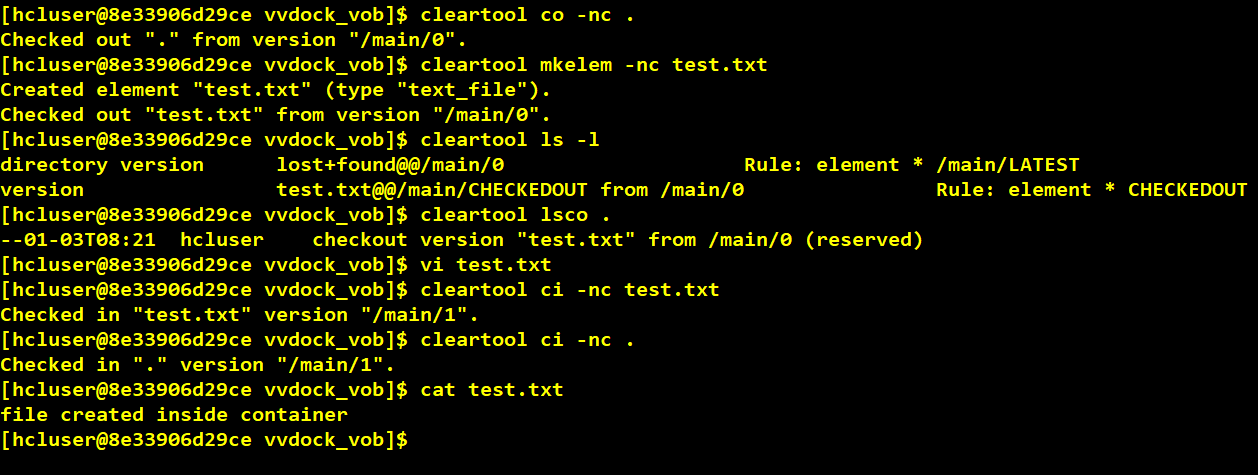

- After the view is created in the container, we set into the view to access the VOB.

- We then perform a checkout and add a new file element under the VOB by executing “mkelem.” Finally, we run the cat command to see the contents of the test.txt file.

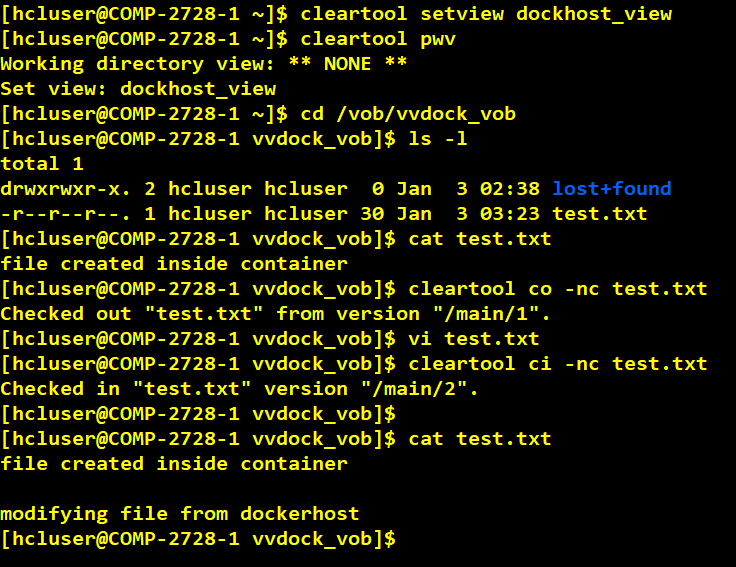

- The file “test.txt” created inside the container is accessible from the Docker host.
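The container-side session above can be approximated by the sketch below. It makes several assumptions: a view with the hypothetical tag container_view already exists, /view is the view root, and /vob/testvob is the VOB tag created earlier. Instead of “cleartool setview,” which opens a new shell, the sketch starts the view and works through its view-extended path so that the commands can run in sequence. Commands are printed rather than executed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch of the VersionVault session inside the container.
# Assumptions: the view tag container_view is a placeholder for an existing view,
# /view is the view root, and /vob/testvob is the VOB tag used in this post.
# Commands are only printed by default; set DRY_RUN=0 to execute them.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

vob_session() {
    view_tag=$1
    vob_tag=$2
    # List the registered VOBs and describe the one hosted on the Docker host.
    run cleartool lsvob
    run cleartool describe "vob:$vob_tag"
    # Start the view and enter the VOB through the view-extended path.
    run cleartool startview "$view_tag"
    run cd "/view/$view_tag$vob_tag"
    # Check out the directory, create the file, and turn it into an element.
    run cleartool checkout -nc .
    run sh -c 'echo "hello from the container" > test.txt'
    run cleartool mkelem -nc test.txt
    # Check in the new element and the directory, then show the file.
    run cleartool checkin -nc test.txt
    run cleartool checkin -nc .
    run cat test.txt
}

vob_session container_view /vob/testvob
```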

Sample Build Execution

- In this section we are going to see how we can perform a build within a container.

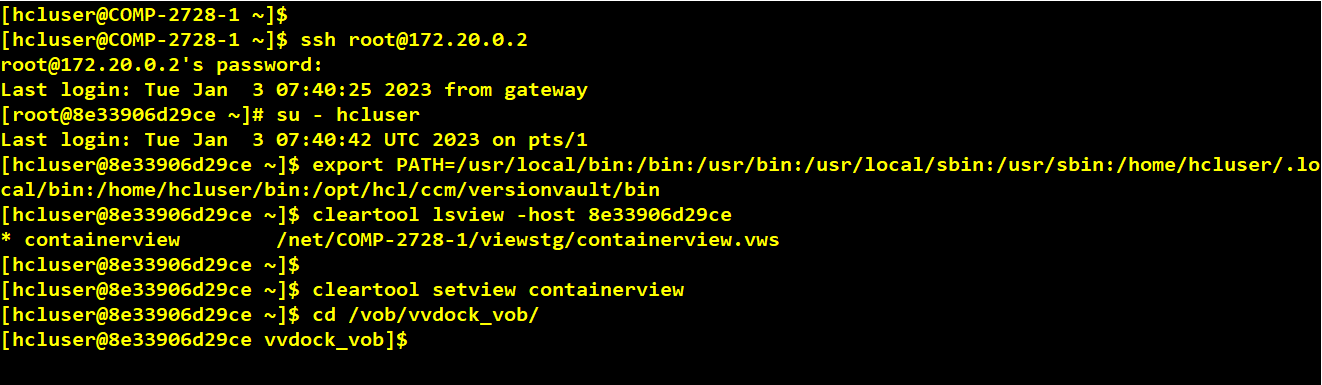

- We will ssh into the container, switch to the VOB owner account, set into the view created in the container, and cd to the VOB.

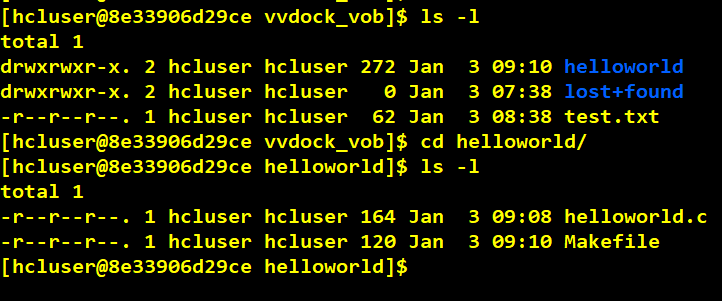

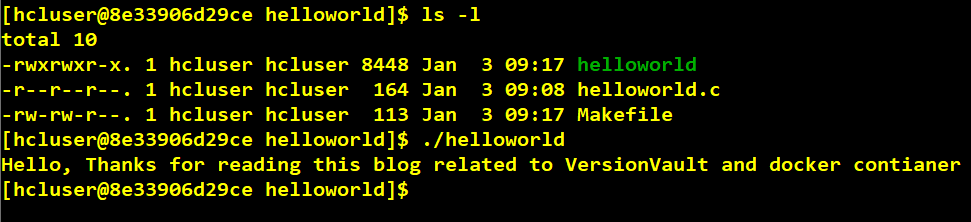

- We can see the “helloworld” directory created under the VOB, in which a simple C program is placed.

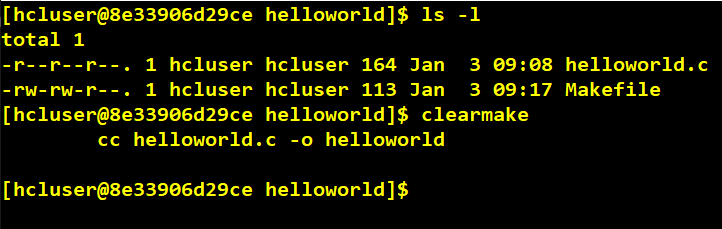

- Now we will run the “clearmake” build utility to compile the simple C code, then run the “helloworld” program to see the output.

- After completion of “clearmake” we can see from the above screenshot that the “helloworld” executable has been created and is ready for execution.

- In this example we saw a simple clearmake build inside a container. Other customized builds can be configured in a similar way and executed inside a Docker container. This lets us run different build environments in different containers instead of configuring multiple physical build machines, which saves cost and time.
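If you want to try a build like this yourself, the sketch below writes a tiny C program and a makefile into a directory. The file names and contents are hypothetical stand-ins for the sample shown in the screenshots, and the demonstration uses a temporary directory rather than a VOB.

```shell
#!/bin/sh
# Write a tiny C program and a makefile into the given directory.
# File names and contents are hypothetical stand-ins for the sample in this post.
make_sample() {
    dir=$1
    mkdir -p "$dir" || return 1
    cat > "$dir/helloworld.c" <<'EOF'
#include <stdio.h>

int main(void)
{
    printf("Hello, world!\n");
    return 0;
}
EOF
    # printf emits the tab character that make requires before the recipe line.
    printf 'helloworld: helloworld.c\n\tcc -o helloworld helloworld.c\n' > "$dir/Makefile"
}

# Demonstration in a scratch directory.
sample_dir=$(mktemp -d)
make_sample "$sample_dir"
ls "$sample_dir"
rm -rf "$sample_dir"
```

With files like these in the “helloworld” directory of the VOB, running “clearmake” inside the view and then “./helloworld” corresponds to the build steps described above.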

- VersionVault can be installed within a container. The installation should be a server installation (not a minimal or full developer installation), since containers should not install and start up the MVFS file system.
