HCL Universal Orchestrator is the first of its kind: a Cloud Native Kubernetes Orchestrator that comes armed with MongoDB, a leading NoSQL database, and Kafka, the market-leading event streaming platform.

HCL Universal Orchestrator is also a leader in the industry, a Meta Orchestrator breaking ground in the move from Traditional Workload Automation to Cloud Native Kubernetes Orchestration. The new setup delivers four to six times the performance of traditional Workload Automation, featuring Per Second Granularity on the Workloads, zero delays between Job Dependencies, and Agentless Orchestration. Every service can now be run from a Cloud Agent without having to deploy any agents at all.

Problem Statement:

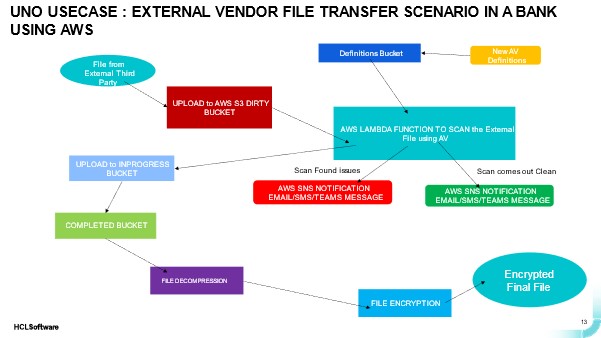

In today’s case study, we’re looking at a bank that wants to sanitize files arriving from external vendors and other sources, scanning them against the latest Anti-Virus Definitions before decompressing and decrypting them for use.

Below is what the bank plans to do for each incoming File. The bank uses AWS as its primary hyperscaler and wants to rely on AWS Services to accomplish this.

- The Company receives multiple Files from External Vendors, and every incoming File needs to be parked in a Dirty Bucket on the AWS S3 Service.

- The Parked File is picked up and placed in an In-Progress Bucket on the AWS S3 Service.

- New Anti-Virus (AV) Definitions are uploaded into a Definitions Bucket.

- The File is sanitized in the In-Progress Bucket by an AWS Lambda Function, which scans it for Virus Signatures using the latest set of Anti-Virus Definitions available in the Definitions Bucket.

- If the Scan comes out Clean, the Sanitized File is promoted to the Completed Bucket; otherwise, a Notification (Email/SMS/Teams Push Notification) is sent through the AWS SNS Service stating that the Scan did not come out Clean.

- An AWS SNS Notification (Email/SMS/Teams Push Notification) is also sent when the File comes out Clean, indicating that the File is good to be processed further.

- The Decompression Process is carried out on the resultant File.

- The File is then decrypted (it was originally encrypted using PGP Encryption).
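
The scan-and-route portion of the steps above can be sketched in plain Python. This is only an illustrative model, not the bank's Lambda code: the `Buckets` class, the `scan` and `process` functions, the byte-pattern signature format, and the notification strings are all hypothetical, and in-memory dictionaries and lists stand in for the S3 buckets and the SNS topic. It also assumes a file that fails the scan simply stays in the In-Progress Bucket, which the case study does not specify.

```python
from dataclasses import dataclass, field


@dataclass
class Buckets:
    """In-memory stand-ins for the S3 buckets and the SNS topic (hypothetical)."""
    dirty: dict = field(default_factory=dict)
    in_progress: dict = field(default_factory=dict)
    definitions: list = field(default_factory=list)    # known-bad byte patterns
    completed: dict = field(default_factory=dict)
    notifications: list = field(default_factory=list)  # stand-in for SNS messages


def scan(data: bytes, signatures: list) -> bool:
    """Toy scanner: return True when no signature occurs in the data."""
    return not any(sig in data for sig in signatures)


def process(buckets: Buckets, name: str) -> str:
    """Move one parked file through scan and routing; return the verdict."""
    data = buckets.dirty.pop(name)      # pick the parked file
    buckets.in_progress[name] = data    # place it in the in-progress bucket
    if scan(data, buckets.definitions):
        # Clean: promote to the completed bucket and notify.
        buckets.completed[name] = buckets.in_progress.pop(name)
        buckets.notifications.append(f"{name}: scan clean, ready for processing")
        return "clean"
    # Not clean: leave the file where it is (an assumption) and notify.
    buckets.notifications.append(f"{name}: scan did not come out clean")
    return "infected"


if __name__ == "__main__":
    b = Buckets(definitions=[b"EVIL"])
    b.dirty["vendor_a.bin"] = b"harmless payload"
    b.dirty["vendor_b.bin"] = b"xxEVILxx"
    print(process(b, "vendor_a.bin"))
    print(process(b, "vendor_b.bin"))
    print(b.notifications)
```

Decompression and PGP decryption would follow only for files that reach the completed bucket; they are left out here to keep the sketch focused on the branch between promotion and notification.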

Solution:

When realized in Universal Orchestrator, the Flow comprises twelve Jobs:

- AWS S3 Upload Job to upload Vendor File into Dirty Bucket.

- AWS S3 Download Job to download Vendor File from Dirty Bucket.

- AWS S3 Upload Job to upload the latest Anti-Virus Definitions into the Definitions Bucket.

- AWS S3 Upload Job to upload Vendor File into In-Progress Bucket.

- AWS Lambda Job to Scan the File using Serverless Code realized as an AWS Lambda Function written in Python.

- If the Scan comes out Clean, the AWS SNS Notification Job notifies the user that the Scan has come out Clean. Based on the Joblog message, we invoke a JSONata function to parse the specific line from the Joblog (Conditional Dependencies are employed in the Flow) and notify that the Scan has come out Clean.

- If the Scan comes out with problems, the AWS SNS Notification Job notifies the user that the Scan has come out with issues. Here too, based on the Joblog message, we invoke a JSONata function to parse the specific lines from the Joblog (Conditional Dependencies are employed in the Flow) and notify that the Scan has come out with issues.

- AWS S3 Download Job to download Vendor File from In-Progress Bucket.

- AWS S3 Upload Job to upload into Completed Bucket.

- AWS S3 Download Job to download from Completed Bucket.

- Decompression Job to decompress the File downloaded from Completed Bucket.

- Decryption Job to decrypt the decompressed File.

All the above Job Types are available out-of-the-box (OOB) as part of the Universal Orchestrator Bundle.
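
The conditional branching around the two notification Jobs can be illustrated with a short Python sketch. It is not Universal Orchestrator syntax and does not use JSONata; it only mirrors the idea of pulling one field out of the Joblog and choosing the next Jobs from it. The Joblog layout (a JSON line carrying a `scan_status` field), the function names, and the Job names are all assumptions made for the example.

```python
import json


def scan_verdict(joblog: str) -> str:
    """Return the scan status from the last JSON line of a (hypothetical) joblog."""
    for line in reversed(joblog.splitlines()):
        line = line.strip()
        if line.startswith("{"):
            try:
                return json.loads(line).get("scan_status", "unknown")
            except json.JSONDecodeError:
                continue  # not valid JSON, keep looking further up the log
    return "unknown"


def next_jobs(verdict: str) -> list:
    """Pick the downstream jobs for a verdict; job names are illustrative only."""
    if verdict == "clean":
        return [
            "SNS_NOTIFY_CLEAN",
            "S3_DOWNLOAD_IN_PROGRESS",
            "S3_UPLOAD_COMPLETED",
            "S3_DOWNLOAD_COMPLETED",
            "DECOMPRESS",
            "DECRYPT",
        ]
    # Anything other than "clean" (including "unknown") takes the issue branch.
    return ["SNS_NOTIFY_ISSUES"]


if __name__ == "__main__":
    sample_log = 'Lambda invoked\n{"scan_status": "clean"}\nLambda finished'
    print(next_jobs(scan_verdict(sample_log)))
```

Treating an unreadable Joblog as a failed scan is a deliberately conservative choice in this sketch, so that a File is never promoted on the basis of a log that could not be parsed.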

Summary:

- Whole Flow of 14-15 Jobs completed in a matter of 14 seconds

- Zero Wait time between Jobs

- Agentless Orchestration

- Achieve enormous efficiency gains of up to 50% on Monitoring Time and free up resources for more value-added work

- End to End Monitoring View of all Business Services from a Single Interface

- Track and know where the Flow is at any point in time

- Identify Failures, Delays and Long runners in real time

- Identify the cause of failure directly from the Interface and remediate issues

- Automate Issue Detection and Recovery through Recovery Flows and Conditional Branching

- Proactively identify anomalies and delays, keep a tab on SLAs, and prevent SLA breaches

- Automate Outage handling and resumption from Application Outages directly from the same Interface

- Ease of Operation via Chatbot over Mobile Application, Desktop, or Virtual Reality-based UI

- Incorporate AI in your workflows and forecast more accurately by taking into account historical trends from past Job Executions
