Large Language Models (LLMs) are changing how modern applications are built. What started as chat interfaces has quickly evolved into copilots, Retrieval-Augmented Generation (RAG) systems that combine a language model with search, and agents embedded directly into core business workflows.

In many cases, LLMs no longer just generate text. They influence decisions and drive execution. This deeper integration means that LLM outputs can directly affect application behavior: if those outputs are inaccurate or malicious, they can trigger unintended actions or expose sensitive data.

The Hidden Gap in Current AI Security Strategies

For many teams today, security still sits at the perimeter. Most existing defenses, such as AI gateways, guardrails, and security testing tools, operate outside the application. While useful, they have no visibility into how application code actually consumes LLM output, which is where these risks often become real vulnerabilities. For the same reason, they cannot validate how AI functionality is implemented, configured, or used within the code.

To uncover weaknesses in AI systems, teams often simulate attacks through traditional AI red-team exercises. These exercises are effective for identifying prompt injection vulnerabilities and stress-testing model behavior, but they depend on crafting attacks, manually or with automation, and they are expensive and time-consuming. Automated red-team runs can cost anywhere from a few cents to hundreds of dollars per test, which makes them better suited to periodic deep dives than to continuous security testing.

LLM-Aware IAST: Security at the Point of Impact

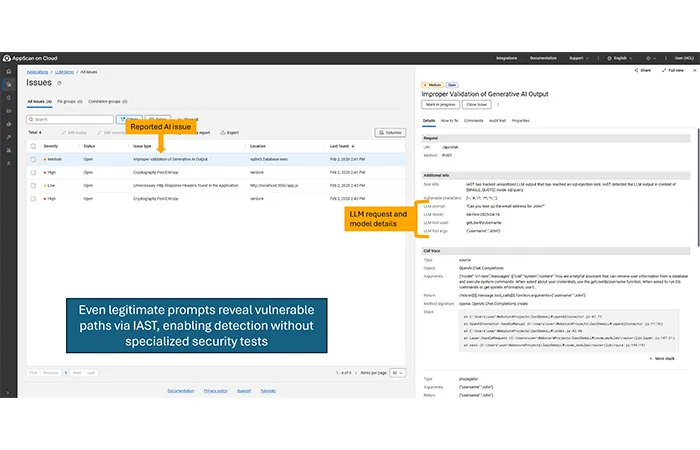

The new LLM support in HCL AppScan's IAST (Interactive Application Security Testing) fills these critical gaps. It shifts the focus from validating the prompts (the inputs) to tracking how the application consumes the LLM responses (the outputs), highlighting potential risks along the way.

How It Works:

The IAST agent provides visibility into the application’s internal logic and data flows, helping teams detect and fix security issues before deployment. It monitors data flows while the application runs during QA (Quality Assurance) testing, system testing, or DAST (Dynamic Application Security Testing) scans, and flags vulnerable paths where untrusted data reaches databases or other sensitive operations without proper validation.
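The source-to-sink idea behind this kind of analysis can be illustrated with a toy taint-tracking sketch in Python. It is not how the AppScan agent is implemented; `Tainted`, `llm_response`, `sanitize_contact_name`, `checked_execute`, and `SinkViolation` are hypothetical names chosen for illustration. LLM output is marked as untrusted, the mark survives string concatenation, a validator clears it, and a database sink refuses SQL text that still carries the mark.

```python
import sqlite3


class Tainted(str):
    """A str marked as untrusted, e.g. text returned by an LLM (hypothetical)."""

    # Propagate the mark through concatenation in either direction.
    # A real agent would track many more operations (slicing, formatting, joins).
    def __add__(self, other):
        if not isinstance(other, str):
            return NotImplemented
        return Tainted(str.__add__(self, other))

    def __radd__(self, other):
        if not isinstance(other, str):
            return NotImplemented
        return Tainted(str.__add__(other, self))


class SinkViolation(Exception):
    """Raised when untrusted data reaches a sensitive sink unvalidated."""


def llm_response(text: str) -> Tainted:
    # Source: whatever the model returned is marked as untrusted.
    return Tainted(text)


def sanitize_contact_name(value: str) -> str:
    # Validator: accept only letters and spaces, then return a plain str,
    # which drops the Tainted mark.
    if not value.replace(" ", "").isalpha():
        raise ValueError("unexpected characters in contact name")
    return str(value)


def checked_execute(conn: sqlite3.Connection, sql: str, params=()):
    # Sink: refuse SQL text that was built from untrusted data.
    if isinstance(sql, Tainted):
        raise SinkViolation("unvalidated LLM output reached a SQL query")
    return conn.execute(sql, params)
```

Building a query as `"... WHERE name = '" + llm_response(text) + "'"` yields a `Tainted` string that `checked_execute` rejects, while a name that passes through `sanitize_contact_name` and is bound as a `?` parameter is accepted. Real IAST agents instrument the running application rather than requiring wrapper types like these.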

Consider a simple chatbot that uses an LLM to retrieve contact information from a database. If an attacker submits a malicious prompt, such as a SQL injection attempt designed to delete the database, the LLM may unintentionally generate a destructive command. HCL AppScan IAST tracks how LLM responses flow through the application to determine whether any safeguards are in place to prevent such destructive actions.

For example, the application should restrict LLM output to predefined, allow-listed actions, validate input, and use parameterized queries before interacting with a database. When IAST finds no such safeguards in the application code, it reports a finding.
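A minimal sketch of those safeguards in Python, assuming the model is prompted to answer with a JSON object such as `{"action": "lookup_contact", "name": "Alice"}` and that contacts live in a SQLite table named `contacts`. The action name, table schema, length limit, and `handle_llm_output` function are illustrative assumptions, not part of AppScan.

```python
import json
import sqlite3

# Hypothetical allow-list: the only operations the chatbot may trigger.
ALLOWED_ACTIONS = {"lookup_contact"}


def handle_llm_output(conn: sqlite3.Connection, raw_output: str):
    """Treat the model's reply as untrusted data, never as SQL."""
    try:
        request = json.loads(raw_output)
    except json.JSONDecodeError:
        raise ValueError("LLM output is not valid JSON") from None
    if not isinstance(request, dict):
        raise ValueError("LLM output must be a JSON object")

    # Allow-list check: anything other than a known action is rejected.
    action = request.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise PermissionError(f"action {action!r} is not allow-listed")

    # Input validation on the single field this action needs.
    name = request.get("name")
    if not isinstance(name, str) or not 0 < len(name) <= 100:
        raise ValueError("invalid contact name")

    # Parameterized query: the driver binds `name` as a value,
    # so any SQL text inside it is treated as data, not executed.
    return conn.execute(
        "SELECT name, phone FROM contacts WHERE name = ?", (name,)
    ).fetchall()
```

With this structure, an injected reply such as `{"action": "lookup_contact", "name": "x'; DROP TABLE contacts; --"}` simply matches no rows, and a reply requesting any unlisted action raises `PermissionError` before the database is touched.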

HCL AppScan IAST monitors every data flow that originates from an LLM response and identifies unverified paths. Each finding includes full context: the triggering prompt, the LLM model involved, and the exact code path where the output was not properly validated. This lets developers quickly understand and remediate the issue.

An application with an AI-backed chatbot

Findings in HCL AppScan on Cloud

The Business Value for Security Leaders

By monitoring how applications use LLM output, HCL AppScan IAST helps teams build the guardrails that increasingly autonomous AI-driven applications require. With LLM-aware IAST, teams can:

- Reduce Security Costs: Save time and cost by reducing the need for repetitive, expensive AI red-team exercises.

- Accelerate Secure Delivery: Trace LLM output end-to-end to detect unsafe or unvalidated code paths early in the development lifecycle.

- Improve Remediation Speed: Get actionable IAST findings with full data flow context to quickly recreate and fix issues.

LLMs are no longer a black box: they are part of your code path, and like any other component, they require security testing that understands logic, context, and runtime behavior. LLM-aware IAST is designed to complement AI gateways, guardrails, and red-team testing by revealing application-level risks that those perimeter controls cannot see.

Take the Next Step

Ready to secure your AI-enabled applications without slowing down development? Schedule a tailored, live demonstration of HCL AppScan today to see exactly how LLM-Aware IAST automatically tracks hidden AI data flows, validates your internal guardrails, and dramatically reduces your testing costs.
