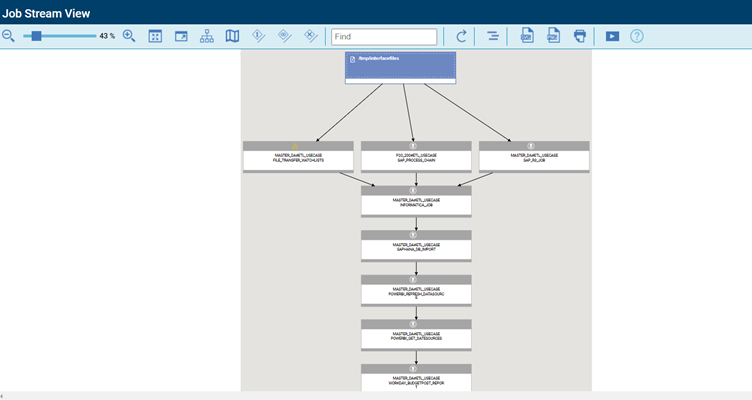

In this blog, we will go through a complete end-to-end use case of data pipeline orchestration.

The scenario uses HCL Workload Automation to orchestrate data arriving from multiple source systems. File detection, processing of the source files, and post-processing can each take place on different systems.

The data transformation is done via the data staging/transformation platform, followed by ingestion of the transformed data into the data warehouse. Once data is ingested into the data warehouse, we can run analytics on it through any of the popular analytics platforms.

The analytics step can then be followed by a status query. Once its response has been gathered, reports are run from the reporting tool.

Customer Requirement

Customers who need to orchestrate their data pipeline end-to-end and who receive multiple interface files from different source systems will benefit from this process. The interface files must be detected in real time upon arrival and processed in real time on different systems.

The customer uses SAP as their main ERP and has an SAP R/3 system that processes an incoming interface file with an ABAP program, passing a variant and a step user. The customer also needs an SAP process chain to run as part of the flow to process an incoming file. There is also a file transfer step that fetches one of the source files and imports it into another target.

Once the data processing is done, the generated output needs to be transformed, in this case with Informatica as the data transformation tool. The transformed data then needs to be ingested into a data warehouse, such as SAP Data Warehouse on the SAP HANA DB.

Here, the customer uses Power BI as the main product to run analytics on top of the ingested data, which requires refreshing the Power BI data source. The status of the refreshed data source needs to be fetched, and the step must finish successfully. After the status check, reports are run using the Workday application and can then be mailed out to the users.

Failure at any step sends out alerts in MS Teams. There’s also an alert on MS Teams if the file transfer step takes longer than 3 minutes during processing.

Solution

HCL Workload Automation orchestrates this data pipeline end-to-end by cutting across multiple applications to run different jobs in the flow.

Workload Automation also detects source files from the different source systems in real time and uses the plugins available within the solution to connect readily to each application.

Individual steps run in real time while alerts, abends/failures, and long runners are tracked. Where needed, recovery is handled through automated conditional branching. Recovery jobs and/or event rules can also be set up as part of the automated recovery process.
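The branching and recovery behaviour described above can be pictured with a small sketch. This is plain Python, not HWA job stream syntax. The `Step` class, the `run_flow` function, and the step names are hypothetical and only illustrate the idea: run steps in order, try a recovery job when a step fails, and raise an alert either way.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    """One job in the flow; `recovery` is an optional fallback job."""
    name: str
    run: Callable[[], bool]                       # returns True on success
    recovery: Optional[Callable[[], bool]] = None


def run_flow(steps: List[Step], alert: Callable[[str], None]) -> bool:
    """Run steps in order; on failure try recovery, otherwise alert and stop."""
    for step in steps:
        if step.run():
            continue
        if step.recovery is not None and step.recovery():
            alert(f"{step.name}: failed, recovery succeeded")
            continue
        alert(f"{step.name}: failed, flow stopped")
        return False
    return True


if __name__ == "__main__":
    alerts: List[str] = []
    flow = [
        Step("sap_batch_job", run=lambda: True),
        Step("file_transfer", run=lambda: False, recovery=lambda: True),
        Step("informatica_workflow", run=lambda: True),
    ]
    print(run_flow(flow, alerts.append), alerts)
```

In the product itself, the same decisions would be expressed through conditional dependencies, recovery jobs, and event rules rather than application code.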

Detecting Source Files

Source files can be detected through event rules: a “file created” event triggers an action that submits the job stream in real time. Alternatively, file dependencies can be used.
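To make the "file created" idea concrete, here is a minimal sketch of the underlying pattern, assuming a simple directory scan. It does not use any HWA API. `detect_new_files` and the file name are hypothetical, and the `on_created` callback stands in for the action that would submit the job stream.

```python
import os
import tempfile
from typing import Callable, Set


def detect_new_files(directory: str, seen: Set[str],
                     on_created: Callable[[str], None]) -> None:
    """Call on_created once for every file not seen in earlier scans."""
    for name in sorted(os.listdir(directory)):
        path = os.path.join(directory, name)
        if os.path.isfile(path) and path not in seen:
            seen.add(path)
            on_created(path)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as inbox:
        seen: Set[str] = set()
        submitted = []
        detect_new_files(inbox, seen, submitted.append)   # nothing there yet
        open(os.path.join(inbox, "interface_01.dat"), "w").close()
        detect_new_files(inbox, seen, submitted.append)   # new file detected
        detect_new_files(inbox, seen, submitted.append)   # not triggered twice
        print(submitted)
```

The `seen` set is what keeps a file from triggering the flow more than once, which is the property an event rule gives you without any polling code.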

SAP R/3 Batch Job

The SAP R/3 batch job consists of running an ABAP program with a variant and a step user.

You can also specify print parameters here, such as new spool requests, printer formats, or spool recipients, as well as the archive parameters you would find within SM36. These can also be used in multi-step SAP jobs.

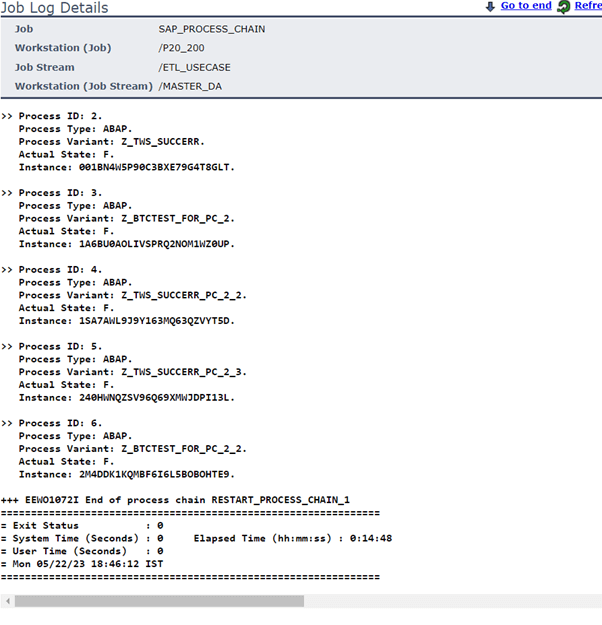

HWA fetches the SAP job log from SAP in real time into the local job log within HWA.

SAP Process Chain Job

An SAP process chain job runs SAP BW process chains, with the option of passing the execution user that triggers the BW process chain.

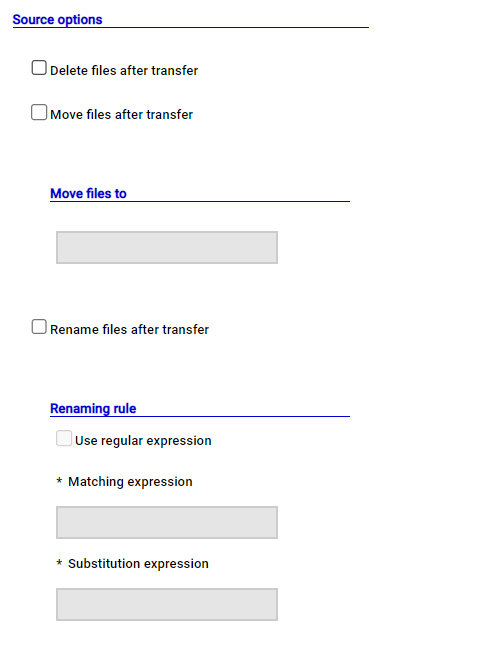

File Transfer Job

A file transfer job can be used to trigger file transfers to and from external third parties or internally between two different servers.

You also have the option of leveraging the A2A (Agent to Agent) protocol to do HTTPS transfers from source to destination between agents. Further options include regular expression mappings, archival at source, and archival at destination with date and time stamps.
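As an illustration of what regular expression mapping and date and time stamped archival amount to, here is a short sketch in plain Python. The function names, the file names, and the stamp format are assumptions made for this example and are not the file transfer plugin's own settings.

```python
import re
from datetime import datetime


def map_target_name(source_name: str, pattern: str, replacement: str) -> str:
    """Rename a file with a regular expression; unmatched names are unchanged."""
    return re.sub(pattern, replacement, source_name)


def archive_name(file_name: str, moment: datetime) -> str:
    """Add a date and time stamp before the extension for archival."""
    stem, dot, ext = file_name.rpartition(".")
    stamp = moment.strftime("%Y%m%d_%H%M%S")   # assumed stamp format
    if not dot:                                 # file has no extension
        return f"{file_name}_{stamp}"
    return f"{stem}_{stamp}.{ext}"


if __name__ == "__main__":
    print(map_target_name("orders_123.csv", r"^orders_(\d+)\.csv$", r"sales_\1.dat"))
    print(archive_name("orders_123.csv", datetime(2024, 1, 2, 3, 4, 5)))
```

With these inputs the first call yields `sales_123.dat` and the second yields `orders_123_20240102_030405.csv`.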

In this case, the file transfer step runs long and exceeds the maximum duration of 3 minutes set for this particular job.

This results in a notification alert sent to MS Teams via an event rule.
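The long-runner check and the resulting notification can be sketched as follows. This is an illustration, not the event rule itself. It assumes an MS Teams incoming webhook that accepts a JSON body with a `text` field. The webhook URL and job name in the usage line are placeholders, and the request is only built, never sent.

```python
import json
import urllib.request
from datetime import timedelta

MAX_DURATION = timedelta(minutes=3)   # threshold taken from the scenario


def exceeded_max_duration(elapsed: timedelta,
                          limit: timedelta = MAX_DURATION) -> bool:
    """True only when the job has run strictly longer than the limit."""
    return elapsed > limit


def build_teams_alert(webhook_url: str, job_name: str,
                      elapsed: timedelta) -> urllib.request.Request:
    """Build (but do not send) the webhook request for a long-running job."""
    text = (f"Job {job_name} has been running for "
            f"{int(elapsed.total_seconds())}s, limit is "
            f"{int(MAX_DURATION.total_seconds())}s")
    body = json.dumps({"text": text}).encode("utf-8")   # assumed payload shape
    return urllib.request.Request(
        webhook_url, data=body,
        headers={"Content-Type": "application/json"}, method="POST")


if __name__ == "__main__":
    elapsed = timedelta(seconds=200)
    if exceeded_max_duration(elapsed):
        request = build_teams_alert(
            "https://example.invalid/webhook", "FILE_TRANSFER", elapsed)
        print(request.get_method(), request.data.decode("utf-8"))
```

Passing the built request to `urllib.request.urlopen` would deliver the alert once a real webhook URL is configured.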

Informatica Job

The data transformation is done via an Informatica step, which runs an Informatica PowerCenter workflow from an Informatica folder. The job definition also specifies the service domain, repository domain, repository name, etc.

The job log in HWA depicts the transformation run within Informatica.

SAP HANA Job

An SAP HANA DB job is used to query the database or run a stored procedure against an SAP HANA DB. The job definition also references the SAP HANA NGDBC driver. In this flow, the job performs the import into the SAP HANA DB.

Note: After the import into the database, we could also run analytics on top of the ingested data.

Power BI Refresh Data Source

The next step after the import into the HANA DB is to refresh a Power BI data source. This can be accomplished through a RESTful job within HWA.

A RESTful job calls a service URL over HTTPS (in this case, with a POST), passing a JSON body. The body can be supplied either via a file or directly in the body field of the job.
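For readers who want to see the shape of such a call, here is a hedged sketch of the refresh request in Python. It assumes the Power BI REST API's dataset refresh endpoint under `https://api.powerbi.com/v1.0/myorg` and a bearer token obtained elsewhere. The dataset ID and token are placeholders, the `notifyOption` value is one possible choice, and the request is only constructed, not sent.

```python
import json
import urllib.request

POWER_BI_API = "https://api.powerbi.com/v1.0/myorg"   # assumed base URL


def build_refresh_request(dataset_id: str,
                          access_token: str) -> urllib.request.Request:
    """Build the HTTPS POST that asks Power BI to refresh a dataset."""
    url = f"{POWER_BI_API}/datasets/{dataset_id}/refreshes"
    body = json.dumps({"notifyOption": "NoNotification"}).encode("utf-8")
    return urllib.request.Request(
        url, data=body,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST")


if __name__ == "__main__":
    request = build_refresh_request("DATASETID", "ACCESS_TOKEN")
    print(request.get_method(), request.full_url)
```

In the RESTful job, the same URL, method, and JSON body would be entered in the job definition instead of being assembled in code.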

Get the Status of Power BI Refresh

The status of the previous step is pulled through another RESTful job, which does an HTTPS GET on the refresh status, passing the DATASETID and REFRESHID as variables from the previous step.
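The status check can be sketched along the same lines. The URL layout and the status names below (`Completed`, `Unknown`, `NotStarted`, `InProgress`) are assumptions based on the Power BI REST API and should be verified against its documentation. `classify_refresh` is a hypothetical helper that decides whether the flow may continue.

```python
import json

POWER_BI_API = "https://api.powerbi.com/v1.0/myorg"   # assumed base URL
SUCCESS_STATES = {"Completed"}                          # assumed status names
RUNNING_STATES = {"Unknown", "NotStarted", "InProgress"}


def status_url(dataset_id: str, refresh_id: str) -> str:
    """URL for the HTTPS GET on one refresh of one dataset."""
    return f"{POWER_BI_API}/datasets/{dataset_id}/refreshes/{refresh_id}"


def classify_refresh(response_body: str) -> str:
    """Map the JSON response to 'success', 'running', or 'failed'."""
    status = json.loads(response_body).get("status", "")
    if status in SUCCESS_STATES:
        return "success"
    if status in RUNNING_STATES:
        return "running"
    return "failed"


if __name__ == "__main__":
    print(status_url("DATASETID", "REFRESHID"))
    print(classify_refresh('{"status": "Completed"}'))
```

Treating every unrecognized status as a failure is a deliberately conservative choice, so that the reporting step never runs on data whose refresh state is unclear.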

Workday Reports

Reports are run in the Workday application by again using a RESTful POST job, passing the JSON body directly in the body field of the job.

Overall Status of the Data Pipeline

Using the graphical views of the product, it’s easy to track this entire process in real time and get alerted to failures or long runners via MS Teams, a ticketing tool, email, or SMS.

Advantages of Running Data Pipeline Orchestration over HWA

- Any step in data ingestion, data processing, DB import, analytics, or reporting can be realized with the 99+ plugins shipped with the product.

- Job flow initiation, dependency handling, and source file detection are available out of the box.

- The flow can be tracked via the chatbot CLARA or via the graphical views in the Dynamic Workload Console.

- Alerting can be over MS Teams (or any messaging tool of your choice), ticketing tool, email, or over SMS.

- The entire orchestration is achieved end-to-end through HWA.

For more information on HCL Software’s Workload Automation, click here.
